Alphabet, the parent company of Google, has unveiled Gemini, its latest artificial intelligence model. Announced by CEO Sundar Pichai and developed by Google DeepMind, Gemini is described by Google as its most capable model to date in language understanding and multimodal AI.

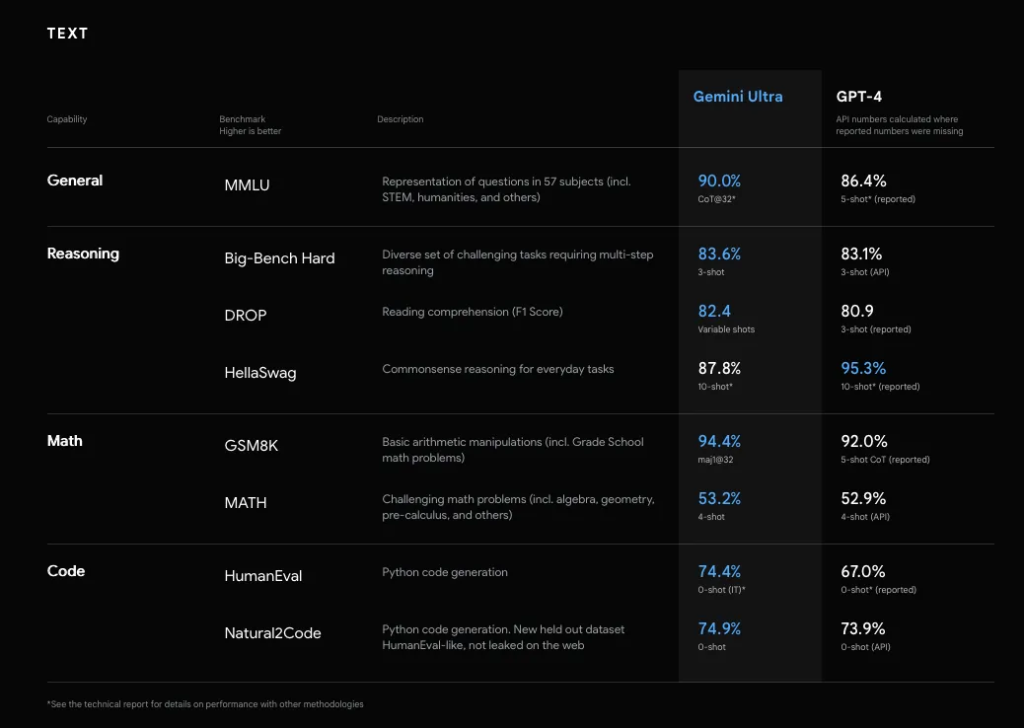

Google reports that Gemini Ultra, the largest version of the model, outperforms human experts on Massive Multitask Language Understanding (MMLU), a rigorous benchmark that tests knowledge and problem solving across 57 subjects such as mathematics, history, law, and medicine. Beyond MMLU, Google says Gemini can generate code from diverse inputs, combine text and images, and perform visual reasoning across multiple languages.
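MMLU results are reported as accuracy: the share of multiple-choice questions answered correctly. A claim like "above 90% versus 89% for human experts" is therefore a direct comparison of two percentages. The minimal sketch below shows that arithmetic on hypothetical toy data. The `accuracy` helper and the sample answers are illustrative assumptions, not Google's evaluation harness, and real MMLU scores also depend on how the model is prompted.

```python
def accuracy(predictions: list[str], answers: list[str]) -> float:
    """Fraction of multiple-choice questions answered correctly."""
    if len(predictions) != len(answers):
        raise ValueError("predictions and answers must have the same length")
    if not answers:
        raise ValueError("need at least one question")
    correct = sum(p == a for p, a in zip(predictions, answers))
    return correct / len(answers)


# Human-expert figure as quoted in the article (89%).
HUMAN_EXPERT_BASELINE = 0.89

# Hypothetical toy data: four questions with answer choices A-D.
preds = ["A", "C", "B", "D"]  # hypothetical model answers
gold = ["A", "C", "D", "D"]   # hypothetical answer key

score = accuracy(preds, gold)
print(f"accuracy: {score:.1%}")                         # accuracy: 75.0%
print("beats human baseline:", score > HUMAN_EXPERT_BASELINE)  # beats human baseline: False
```

A full benchmark run applies the same calculation to thousands of questions; only the size of the answer lists changes.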

Sundar Pichai highlighted Gemini's results on multimodal benchmarks, which Google reports exceed those of OpenAI's GPT-4, the model behind ChatGPT. "Gemini has revolutionized the field, pushing the boundaries of what AI can achieve. Two years ago, the state-of-the-art performance was between 30% and 40%. Today, Gemini has crossed the 90% threshold in MMLU, surpassing the 89% mark achieved by human experts," Pichai said.

Google says Gemini was designed for efficiency and scalability, making it adaptable for integration with existing tools and APIs. Unlike open-source models, however, Gemini's weights are not publicly released; developers build on it through Google's hosted APIs.

Gemini comes in three sizes: Gemini Ultra, the largest and most capable; Gemini Pro, a mid-sized model for a broad range of tasks; and Gemini Nano, the smallest and most efficient, designed to run on devices. Google's chatbot Bard, a ChatGPT competitor, will be powered by Gemini Pro, and Gemini Nano will run on Google's Pixel 8 Pro phone.

Public reaction to Gemini has been mixed: some users report impressive results, while others note occasional inaccuracies. Melanie Mitchell, an AI researcher at the Santa Fe Institute, acknowledged Gemini's sophistication but questioned whether it significantly surpasses GPT-4's capabilities.

Gemini is a family of multimodal large language models and the successor to Google's earlier models LaMDA and PaLM 2. Its architecture is based on decoder-only Transformers, optimized for efficient training and inference on Google's Tensor Processing Units (TPUs). The models accept interleaved inputs of text, images at varying resolutions, video (as sequences of frames), and audio, all of which are processed into a single sequence of tokens.
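What makes a Transformer "decoder-only" is causal self-attention: each token in the sequence may attend only to itself and to earlier tokens, which lets the model predict the next token from everything before it. The minimal, single-head sketch below illustrates that generic technique in plain Python. It is not Gemini's implementation; the function names and toy vectors are hypothetical, and real models add learned projections, many heads, and batched tensor math on accelerators such as TPUs.

```python
import math


def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def causal_self_attention(q, k, v):
    """Single-head scaled dot-product attention with a causal mask.

    q, k, v: lists of T vectors (lists of floats). Position t attends only
    to positions 0..t, so later tokens cannot influence earlier outputs.
    """
    d = len(q[0])
    out = []
    for t in range(len(q)):
        # Scores are computed only for positions s <= t (the causal mask).
        scores = [
            sum(qi * ki for qi, ki in zip(q[t], k[s])) / math.sqrt(d)
            for s in range(t + 1)
        ]
        weights = softmax(scores)
        # Weighted sum of the visible value vectors.
        out.append(
            [sum(w * v[s][j] for s, w in enumerate(weights)) for j in range(len(v[0]))]
        )
    return out


if __name__ == "__main__":
    # Hypothetical 3-token sequence with 2-dimensional embeddings.
    q = k = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
    v = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0]]
    for t, row in enumerate(causal_self_attention(q, k, v)):
        print(t, [round(x, 3) for x in row])
```

Because every modality ends up as tokens in one sequence, the same masking rule applies whether a position holds a word, an image patch, or an audio frame.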

Before release, the Gemini team conducted model impact assessments to identify potential societal benefits and risks. These assessments informed the model policies that guided Gemini's development and evaluation, and the team ran evaluations to check Gemini against these policies and key risk areas.

To mitigate safety issues and reduce hallucination, the team applied mitigations at the data layer and used instruction tuning, targeting factuality behaviors such as attribution, closed-book response generation, and hedging (acknowledging uncertainty rather than guessing when the model cannot answer reliably). In line with President Joe Biden's Executive Order 14110, Google has committed to sharing Gemini Ultra's testing results with the U.S. federal government.
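Hedging can be illustrated with a generic confidence-threshold abstention rule: if the system's confidence in a candidate answer falls below a cutoff, it returns a hedged reply instead of a possibly hallucinated one. This is a swapped-in textbook technique, not how Google implemented hedging in Gemini, which the article does not detail. The `Candidate` type, the `confidence` score, the 0.7 threshold, and the hedge message are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    text: str
    # Hypothetical confidence in [0, 1], e.g. from a separate calibrated scorer.
    confidence: float


# Hypothetical wording for a hedged response.
HEDGE_MESSAGE = "I'm not certain enough to answer that reliably."


def answer_or_hedge(candidate: Candidate, threshold: float = 0.7) -> str:
    """Return the candidate answer if its confidence meets the (hypothetical)
    threshold; otherwise return a hedged response instead."""
    if not 0.0 <= threshold <= 1.0:
        raise ValueError("threshold must be in [0, 1]")
    if candidate.confidence >= threshold:
        return candidate.text
    return HEDGE_MESSAGE


if __name__ == "__main__":
    print(answer_or_hedge(Candidate("Paris is the capital of France.", 0.95)))
    print(answer_or_hedge(Candidate("The treaty was signed in 1723.", 0.30)))
```

Raising the threshold trades coverage for fewer confident errors; where to set it is a design choice that depends on how costly a wrong answer is.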

Developers interested in exploring Gemini further can access a detailed technical report made available by Google.