OpenAI’s addition of image analysis to ChatGPT is a substantial upgrade. It allows for more diverse and complex interactions and changes how we perceive and engage with AI chatbots. However, with every advancement comes a responsibility to manage it appropriately.

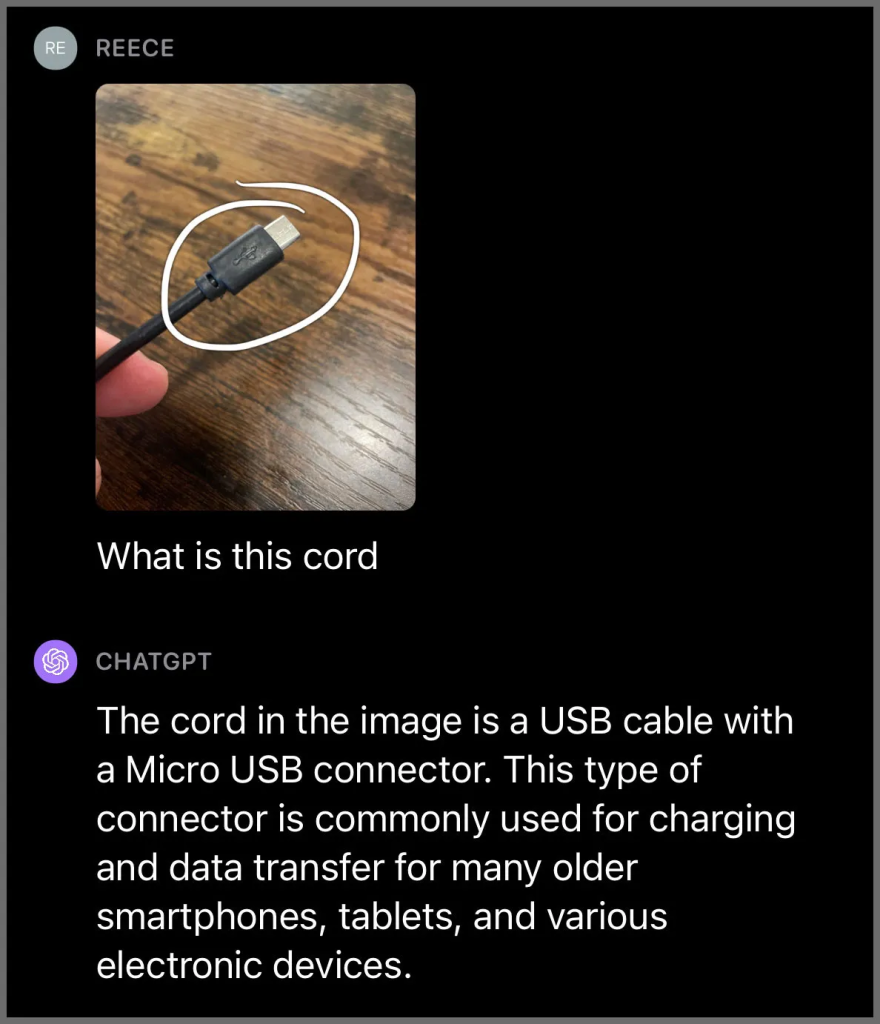

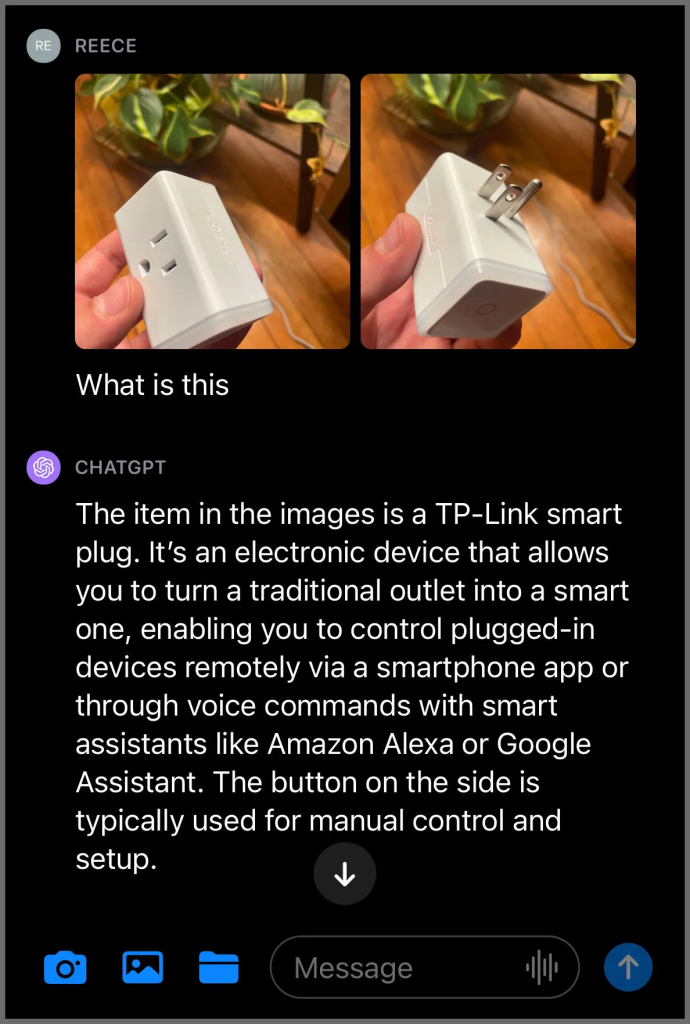

From my initial tests, the image feature introduces some innovative functionality, but within clearly established boundaries. OpenAI has prioritized user privacy and safety, which I experienced first-hand during my experiments: ChatGPT was reluctant to identify real people, even celebrities.

Yet there were some discrepancies. In one instance, the chatbot made several incorrect identifications and settled on the right one only after multiple prompts. ChatGPT is capable, but it is not flawless, and users should approach it with an understanding of its current limitations.

A significant concern for the future is what happens if these guardrails are bypassed. The potential for misuse, such as stalking and harassment, particularly of vulnerable groups, is alarming. So while the evolution of ChatGPT’s image analysis is thrilling from a technological standpoint, it remains vital to emphasize ethical use and the protection of user privacy.

In conclusion, OpenAI’s image analysis for ChatGPT is a significant step forward for AI, but it should be approached with caution and respect for its potential implications. Users should know its capabilities and limitations and use the platform responsibly.